At the Lawrence Livermore National Laboratory in California, a supercomputer named “Sequoia” puts nearly every other computer on the planet to shame. With roughly 1.6 million processor cores (16 per CPU) across 96 racks, Sequoia can perform more than 16 thousand trillion calculations per second, or 16.32 petaflops.

Who would need such horsepower? The IBM Blue Gene/Q-based system was built for the Department of Energy for simulations designed to extend the lifespan of nuclear weapons. But for a limited time, the machine is being made available to outside researchers to perform all sorts of tests, a few hours at a time.

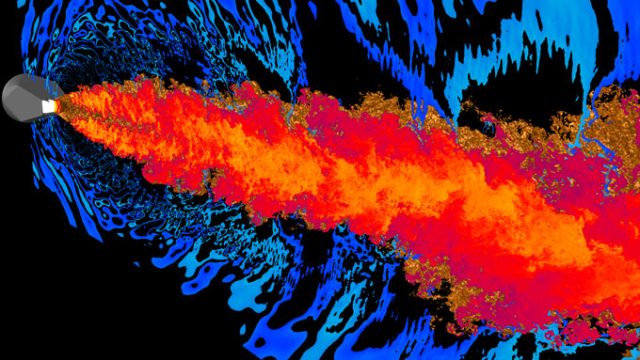

One of the first to take advantage of this opportunity was Stanford University’s Center for Turbulence Research, and it did not hesitate to find out what the machine is really capable of. For three hours on Tuesday of last week, researchers from the center remotely logged in to Sequoia to run a computational fluid dynamics (CFD) simulation on a million cores at once: 1,048,576 cores, to be exact.


from Ars Technica