https://arstechnica.com/?p=1476009

Microsoft announced DirectX Raytracing (DXR) a year ago, promising to bring hardware-accelerated raytraced graphics to PC gaming. In August, Nvidia announced its RTX 2080 and 2080 Ti, a pair of new video cards built on the company’s new Turing RTX processors. In addition to the regular graphics-processing hardware, these chips include two additional sets of cores: one designed for running machine-learning algorithms and the other for computing raytraced graphics. Turing RTX cards have been the first, and so far the only, cards to support DXR.

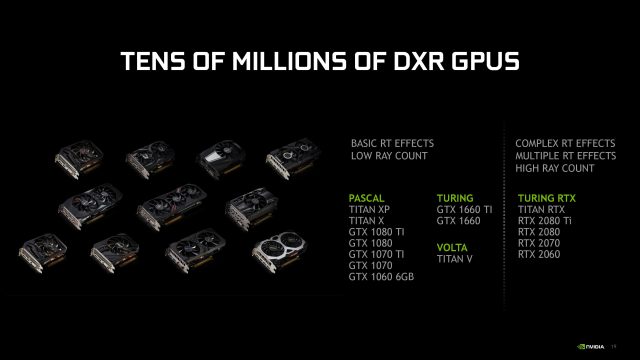

That’s going to change in April: Nvidia has announced that 10-series and 16-series cards will gain some raytracing support with next month’s driver update. Specifically, that covers 10-series cards built with Pascal chips (the GTX 1060 6GB or higher), Titan-branded cards with Pascal or Volta chips (the Titan X, Xp, and V), and 16-series cards with Turing chips (plain Turing, unlike Turing RTX, lacks the extra cores for raytracing and machine learning).

The GTX 1060 6GB and above should start supporting DXR with next month’s Nvidia driver update. (Image: Nvidia)

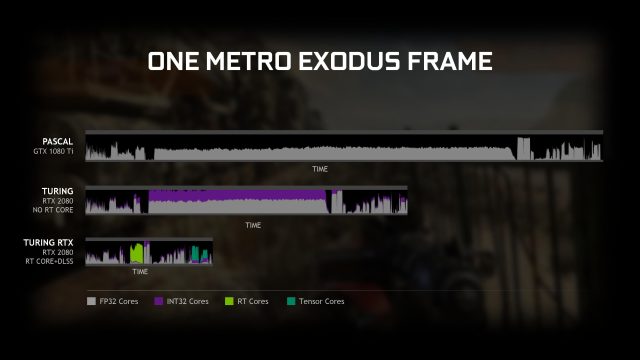

Unsurprisingly, the performance of these cards will not match that of the RTX chips. RTX chips use both their raytracing cores and their machine-learning cores for DXR graphics. To keep performance acceptable, raytracing on the RTX parts simulates relatively few light rays and uses machine-learning-based antialiasing to flesh out the raytraced images. Absent the dedicated hardware, DXR on the GTX chips will use 32-bit integer operations on the CUDA cores already used for computation and shader workloads.

Nvidia says that GTX Turing cards will take about twice as long to render each frame as a Turing RTX card, and Pascal cards about three times as long. The gap is particularly acute on Pascal because of how the work is scheduled. In Turing, the 32-bit integer workload used for raytracing can run concurrently with the 32-bit floating-point workload used for other graphical tasks. That’s not the case on Pascal, where the two workloads have to run consecutively.
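To make the concurrent-versus-consecutive point concrete, here is a deliberately idealized sketch. It assumes a frame's work splits cleanly into an FP32 shading part and an INT32 raytracing part with no other contention, and the function names and timings are hypothetical, not Nvidia's figures. Perfect overlap (Turing) means the longer of the two sets the frame time; no overlap (Pascal) means the costs add.

```python
# Idealized frame-time model. Assumption: a frame = FP32 shading work + INT32
# raytracing work, with no other contention. All numbers are hypothetical.

def frame_time_concurrent(fp32_ms: float, int32_ms: float) -> float:
    """Turing without RT cores: INT32 and FP32 work overlap, so the longer one dominates."""
    return max(fp32_ms, int32_ms)


def frame_time_sequential(fp32_ms: float, int32_ms: float) -> float:
    """Pascal: INT32 and FP32 work run back to back, so their costs add."""
    return fp32_ms + int32_ms


if __name__ == "__main__":
    shading_ms = 12.0     # hypothetical FP32 shading cost per frame
    raytracing_ms = 9.0   # hypothetical INT32 raytracing cost per frame
    print("Turing (concurrent):", frame_time_concurrent(shading_ms, raytracing_ms))  # 12.0
    print("Pascal (sequential):", frame_time_sequential(shading_ms, raytracing_ms))  # 21.0
```

Real GPUs overlap work only partially, so the two functions are best read as a lower and an upper bound on frame time rather than predictions.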

Because of this weaker performance, Nvidia recommends that developers use only simpler raytracing effects on the older chips. On the RTX parts, raytracing performance can be good enough to enable global illumination (indirect lighting from light bouncing off other surfaces, in addition to the usual direct lighting from light sources), but on the GTX parts, Nvidia recommends simpler tasks such as material-specific reflections.

With the RT cores for raytracing and the tensor cores for machine-learning algorithms, the Turing RTX (bottom graph) can process a frame with raytracing relatively quickly. The Turing (middle graph) lacks the dedicated cores, but it can still run the integer workload simultaneously with its floating-point workload, for a total frame time of about double that of the RTX. The Pascal (top graph) has to run the integer and floating-point tasks back to back, so it takes much longer than the Turing, let alone the Turing RTX. (Image: Nvidia)

That raytracing can be performed on these older chips isn’t a big surprise. During DXR’s development, Microsoft used a compute-shader-based raytracing algorithm, so clearly the dedicated hardware isn’t necessary. However, the substantial performance difference shows that the dedicated hardware is going to be important, at least for now.

Existing games that use DXR should automatically start using raytracing on the 10-series and 16-series cards once the drivers are updated, without requiring any game updates. However, given the performance differential, we expect developers will want to tailor their raytracing to the older hardware. Raytracing imposes a substantial burden even on the RTX chips, so using the same level of raytracing quality on the older cards simply isn’t going to be practical.

via Ars Technica https://arstechnica.com

March 19, 2019 at 04:36PM